Error: Fail to fetch remote MRS version text output is: Unable to open connection:, Host does not exist

If you get ssh error messages such as this one, ensure the following: validate the host connection using the specified ssh tool directly and try again, and confirm that the public key is set properly in the /home/sshuser/.ssh/authorized_keys file. Check that file for extra spaces, newlines, and other stray characters, and remove them.
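The `authorized_keys` check described here can be sketched as a small shell function, to be run on the cluster node as the ssh user. This is only an illustration: `check_authorized_keys` is a hypothetical helper name, the list of key types covers only the common ones, and it assumes a POSIX shell with `awk` available.

```shell
#!/bin/sh
# Sketch: report lines in an authorized_keys file that look malformed.
# check_authorized_keys is a hypothetical helper, not part of OpenSSH or MRS.
check_authorized_keys() {
    awk '
        # Blank lines and comment lines are skipped.
        /^[ \t\r]*$/ || /^#/ { next }

        # Stray whitespace or a Windows line ending at the edges of a key line.
        /^[ \t]|[ \t\r]$/ {
            printf "line %d: leading or trailing whitespace\n", NR
            bad = 1
        }

        # A key line needs a key type followed by at least one more field.
        # A key that was wrapped onto a second line fails this check.
        {
            found = 0
            for (i = 1; i < NF; i++)
                if ($i ~ /^(ssh-rsa|ssh-dss|ssh-ed25519|ecdsa-sha2-nistp(256|384|521))$/)
                    found = 1
            if (!found) {
                printf "line %d: no key type followed by key data\n", NR
                bad = 1
            }
        }

        END { exit bad + 0 }
    ' "$1"
}
```

For example, `check_authorized_keys /home/sshuser/.ssh/authorized_keys` prints one line per problem it finds and returns a non-zero status if anything was flagged.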


A hotfix that addresses the error messages reported with RClient 3.3.2/MRS 9.0.1 has been released; the fix and its instructions are available for download.

A few other possible error messages and troubleshooting steps:

Error in checkSshReturnMessage(printOut) : Access denied. Please validate the host connection credential file.

Error in checkSshReturnMessage(printOut) : Host key verification failed.

In some cases, you may find that environment variables needed by Hadoop are not set in the remote sessions run on the sshHostname computer. This may be because a different profile or startup script is being read on ssh login. You can specify the appropriate profile script with the sshProfileScript argument to RxSpark (this should be an absolute path).

Using RClient 3.3.2/MRS 9.0.1, you might get the following error message while running a program that uses a remote Spark compute context:

Error in rxFindWindowsSsh("plink.exe", "ssh.exe", sshClientDir = "") : Could not find neither plink.exe nor ssh.exe.


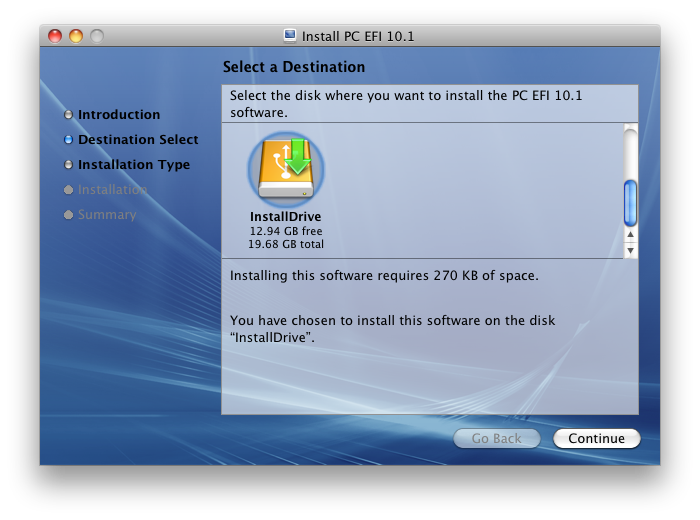

You can create a compute context that will run from your local client in a distributed fashion on your Hadoop cluster. To create the compute context, use RxSpark with additional arguments to specify your user name, the file-sharing directory where you have read and write access, the publicly-facing host name or IP address of your Hadoop cluster’s name node or an edge node that will run the master processes, and any additional switches to pass to the ssh command (such as the -i flag if you are using a ppk file for authentication). RxSpark assumes that the directory containing the plink.exe command (PuTTY) is in your path. If not, you can specify the location of these files using the sshClientDir argument.
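Before creating the compute context, it can help to confirm that an ssh client is actually reachable on your path. The sketch below assumes a POSIX-style shell on the client (for example Git Bash or WSL on Windows). `find_ssh_client` is a hypothetical helper written for this illustration; it only mirrors the idea of looking for plink or ssh and is not how RxSpark itself performs the lookup.

```shell
#!/bin/sh
# Sketch: print the first ssh client found on PATH (plink first, then ssh).
# find_ssh_client is a hypothetical helper, not part of RevoScaleR or PuTTY.
find_ssh_client() {
    for client in plink ssh; do
        # command -v prints the resolved location if the name can be found.
        if path=$(command -v "$client" 2>/dev/null); then
            printf '%s\n' "$path"
            return 0
        fi
    done
    echo "neither plink nor ssh found on PATH; consider passing the client directory via sshClientDir" >&2
    return 1
}
```

If the function reports that nothing was found, either add the PuTTY directory to your path or pass that directory to RxSpark through the sshClientDir argument.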